Meet Histoboard: Your interactive map for navigating foundation models for pathology

Foundation models are rapidly reshaping computational pathology, but comparing their performance across studies remains difficult.

Enter Histoboard: a living platform that aggregates published benchmark results to help researchers and clinicians navigate this evolving landscape.

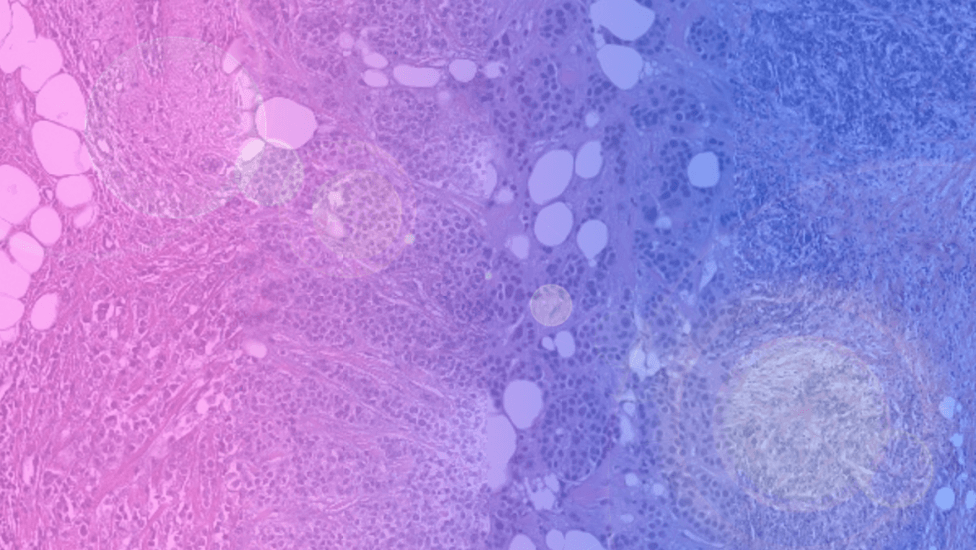

Over the past decade, pathology has been undergoing a profound digital transformation. Glass slides are steadily giving way to whole-slide scanners, and tissue sections that once lived only under microscopes are now stored as gigapixel images. This shift is approaching an inflection point where real-world clinical utility is becoming tangible.

At the same time, this digital transformation has created the conditions for a new generation of artificial intelligence systems: foundation models trained at unprecedented scale on histopathology data. Their promise is substantial. They aim to learn general-purpose representations of tissue that can support a broad spectrum of applications, from research and translational discovery to the development of diagnostic and prognostic tools. Histoboard was created to help researchers and clinicians make sense of this rapidly evolving ecosystem of pathology foundation models.

Scaling foundation model innovation

Foundation models depart from the traditional paradigm of task-specific training. Instead of building a separate neural network for each clinical objective, researchers first train large models on vast collections of digitized slides, often using self-supervised learning. In doing so, the model acquires a broad visual vocabulary of tissue architecture: how nuclei cluster, how glands form, how tumor and stroma interact, and how staining varies across laboratories. Once pretrained, these models can be adapted to downstream tasks such as cancer detection, tumor grading, biomarker prediction or survival modeling with comparatively modest labeled datasets [1-3].
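
To make the adaptation step concrete, here is a minimal sketch of fitting a lightweight classifier on top of frozen embeddings with only a handful of labels. It rests on several assumptions: the embeddings are synthetic Gaussian vectors standing in for the output of a pretrained encoder, the function names (`make_embeddings`, `fit_centroids`, `predict`) are hypothetical, and the nearest-centroid probe is just one simple adaptation strategy, not the protocol used by any of the cited models.

```python
import random

def make_embeddings(n_per_class, dim, shift, rng):
    """Synthetic stand-in for frozen foundation-model embeddings (assumption)."""
    data = []
    for label in (0, 1):
        for _ in range(n_per_class):
            # Class 1 is offset by `shift` in every dimension so classes are separable.
            vec = [rng.gauss(label * shift, 1.0) for _ in range(dim)]
            data.append((vec, label))
    return data

def fit_centroids(train):
    """Average the embeddings of each class; this is the only 'training' step."""
    sums, counts = {}, {}
    for vec, label in train:
        acc = sums.setdefault(label, [0.0] * len(vec))
        for i, value in enumerate(vec):
            acc[i] += value
        counts[label] = counts.get(label, 0) + 1
    return {label: [s / counts[label] for s in acc] for label, acc in sums.items()}

def predict(centroids, vec):
    """Assign the label of the closest class centroid (squared Euclidean distance)."""
    def sq_dist(a, b):
        return sum((x - y) ** 2 for x, y in zip(a, b))
    return min(centroids, key=lambda label: sq_dist(centroids[label], vec))

rng = random.Random(0)
train = make_embeddings(n_per_class=10, dim=32, shift=1.0, rng=rng)   # few labels
test = make_embeddings(n_per_class=200, dim=32, shift=1.0, rng=rng)

centroids = fit_centroids(train)
accuracy = sum(predict(centroids, vec) == label for vec, label in test) / len(test)
print(f"accuracy with 10 labels per class: {accuracy:.2f}")
```

The encoder itself is never updated here; only the class centroids are estimated from the labeled examples, which is why a small labeled set can be enough when the underlying representation is informative.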

Efforts to build such models have proliferated in both academia and industry. For example, UNI [1] was trained on more than 100 million pathology tiles derived from over 100,000 slides to learn generalized representations useful across many histopathology tasks. Virchow [2], one of the largest pathology foundation models to date, showed that a pan-cancer representation could achieve high performance for rare and common cancers alike, even with limited task-specific training labels. GigaPath [4] represents another substantial foundation model, trained on over 171,000 slides and evaluated on genomic prediction and subtyping tasks. These initiatives reflect the diversity of approaches in the field and the ambition to scale both data and model capacity. More recent models such as H-Optimus-1 [5], Atlas 2 [6] and Virchow2 [7] exceed a billion parameters and were trained on 1 to 5 million whole-slide images.

Such rapid innovation has produced impressive results, but it has also revealed a structural weakness: the benchmarking landscape in pathology AI is fragmented. Unlike natural image recognition or language modeling, where shared reference points such as ImageNet or LMArena offer clear points of comparison, computational pathology has no universally adopted suite of evaluation benchmarks spanning tasks, organs and institutions. Many models are tested on private datasets with proprietary splits; others report metrics that are not directly comparable. Differences in preprocessing, tile extraction, stain normalization and evaluation frameworks further confound meaningful comparison. What counts as “state-of-the-art” in one paper may not have been evaluated under the same experimental conditions as competing models in another.

This situation has been described by Faisal Mahmood as part of a broader benchmarking crisis in biomedical machine learning [8]. In an opinion piece published in Nature Medicine, Mahmood argued that the lack of standardized benchmarks and shared evaluation protocols impedes progress and obscures our ability to assess real advances in model performance and generalizability. Without agreed-upon tasks, metrics, and clinically relevant open datasets, comparisons across studies risk becoming arbitrary and misleading.

Recognizing this gap, several initiatives have emerged to standardize evaluation. Patho-Bench [9], developed by Mahmood’s lab, provides canonical train-test splits across hundreds of public datasets and a consistent suite of evaluation protocols for various tasks. PathBench [10], another benchmarking effort, offers multi-center (albeit private) datasets and rigorous evaluation protocols spanning clinically relevant tasks. THUNDER [11] and EVA [12] also set new standards for usability, reproducibility, and evaluation coverage. Such initiatives aim to give researchers a shared set of tasks and metrics against which models can be fairly compared, fostering transparency and cumulative progress.

Histoboard does not introduce a new dataset or evaluation protocol; rather, it collates what is already known. By aggregating reported results from published pathology benchmarks into a single, structured interface, it offers a consolidated view of how foundation models perform across organs, tasks and datasets. Models can be viewed in aggregate, compared directly, or examined within specific benchmarks. The platform does not re-evaluate models or modify reported numbers; it functions as a transparent index of the literature.

The interface has three main components:

- The Leaderboard provides an overall ranking of foundation models based on aggregate performance across multiple tasks and datasets.

- The Arena allows users to compare any two models head-to-head, filtering by organ, task type, or metric, which helps highlight model strengths in specific clinical scenarios.

- Benchmark Pages present detailed task-level results, including links to the original publications and datasets.
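
The Leaderboard and Arena views described above can be sketched in a few lines. This is an illustration under stated assumptions, not Histoboard's actual implementation: the text does not specify how aggregate performance is computed, so the sketch assumes a mean rank across tasks; the record layout and the function names (`group_by_task`, `leaderboard`, `head_to_head`) are hypothetical; and every model name and score is a placeholder rather than a published result.

```python
from collections import defaultdict

# Placeholder records: (model, benchmark, task, organ, metric, value).
# Names and numbers are illustrative only, not results from any publication.
RESULTS = [
    ("model_a", "bench_1", "subtyping", "lung", "auroc", 0.91),
    ("model_b", "bench_1", "subtyping", "lung", "auroc", 0.88),
    ("model_c", "bench_1", "subtyping", "lung", "auroc", 0.93),
    ("model_a", "bench_1", "grading", "prostate", "auroc", 0.80),
    ("model_b", "bench_1", "grading", "prostate", "auroc", 0.84),
    ("model_a", "bench_2", "biomarker", "breast", "auroc", 0.75),
    ("model_b", "bench_2", "biomarker", "breast", "auroc", 0.71),
    ("model_c", "bench_2", "biomarker", "breast", "auroc", 0.73),
]

def group_by_task(results, organ=None):
    """Group scores by (benchmark, task, metric), optionally filtered by organ."""
    tasks = defaultdict(dict)
    for model, bench, task, org, metric, value in results:
        if organ is None or org == organ:
            tasks[(bench, task, metric)][model] = value
    return tasks

def leaderboard(results):
    """Order models by mean rank over the tasks on which each was reported.

    Assumes every metric is higher-is-better; ties are broken arbitrarily.
    """
    ranks = defaultdict(list)
    for scores in group_by_task(results).values():
        ordered = sorted(scores, key=scores.get, reverse=True)
        for position, model in enumerate(ordered, start=1):
            ranks[model].append(position)
    mean_rank = {model: sum(r) / len(r) for model, r in ranks.items()}
    return sorted(mean_rank.items(), key=lambda item: item[1])

def head_to_head(results, first, second, organ=None):
    """Count wins for two models on the tasks where both were reported."""
    wins = {first: 0, second: 0, "ties": 0}
    for scores in group_by_task(results, organ).values():
        if first in scores and second in scores:
            if scores[first] > scores[second]:
                wins[first] += 1
            elif scores[first] < scores[second]:
                wins[second] += 1
            else:
                wins["ties"] += 1
    return wins

for model, rank in leaderboard(RESULTS):
    print(f"{model}: mean rank {rank:.2f}")
print(head_to_head(RESULTS, "model_a", "model_b"))
print(head_to_head(RESULTS, "model_a", "model_b", organ="prostate"))
```

In this toy data, `model_c` tops the mean-rank ordering even though it was reported on only two of the three tasks. That shows why aggregate rankings built from published numbers should be read alongside task coverage, and why filtered head-to-head views are a useful complement.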

Histoboard is designed as a living, open platform that will be continuously updated with new models and benchmark results. While it does not replace rigorous validation or resolve methodological inconsistencies across studies, it provides a practical tool for aggregating results, identifying gaps, and highlighting trends across pathology foundation models. As these models continue to scale and expand across research, translational discovery, and clinical applications, the ability to systematically compare and interpret their performance becomes essential. Histoboard aims to contribute to the emerging infrastructure needed to make foundation models more transparent, comparable, and ultimately ready for real-world clinical adoption.

The promise of foundation models in pathology extends beyond incremental improvements in individual tasks. These models have the potential to unify clinical workflows across organs, integrate multimodal data, and provide robust representations that accelerate downstream research. The question facing the field is no longer whether foundation models will transform pathology, but which models are truly mature, and how we can reliably know it.

Authors

References

[1] Chen, R.J., Ding, T., Lu, M.Y. et al. Towards a general-purpose foundation model for computational pathology. Nat Med 30, 850–862 (2024). https://doi.org/10.1038/s41591-024-02857-3

[2] Vorontsov, E., Bozkurt, A., Casson, A. et al. A foundation model for clinical-grade computational pathology and rare cancers detection. Nat Med 30, 2924–2935 (2024). https://doi.org/10.1038/s41591-024-03141-0

[3] Campanella, G., Hanna, M.G., Geneslaw, L. et al. Clinical-grade computational pathology using weakly supervised deep learning on whole slide images. Nat Med 25, 1301–1309 (2019). https://doi.org/10.1038/s41591-019-0508-1

[4] Xu, H., Usuyama, N., Bagga, J. et al. A whole-slide foundation model for digital pathology from real-world data. Nature 630, 181–188 (2024). https://doi.org/10.1038/s41586-024-07441-w

[5] Bioptimus. (2025). H-optimus-1. Hugging Face. https://huggingface.co/bioptimus/H-optimus-1

[6] Alber, M. et al. Atlas 2 - Foundation models for clinical deployment. arXiv (2026). https://doi.org/10.48550/arXiv.2601.05148

[7] Zimmermann, E. et al. Virchow2: Scaling self-supervised mixed magnification models in pathology. arXiv (2024). https://doi.org/10.48550/arXiv.2408.00738

[8] Mahmood, F. A benchmarking crisis in biomedical machine learning. Nat Med 31, 1060 (2025). https://doi.org/10.1038/s41591-025-03637-3

[9] Zhang, A., Jaume, G., Vaidya, A., Ding, T., & Mahmood, F. Accelerating data processing and benchmarking of AI models for pathology. arXiv (2025). https://arxiv.org/abs/2502.06750

[10] Ma, J. et al. PathBench: A comprehensive comparison benchmark for pathology foundation models towards precision oncology. arXiv (2025). https://doi.org/10.48550/arXiv.2505.20202

[11] Marza, P. et al. THUNDER: Tile-level Histopathology image UNDERstanding benchmark. arXiv (2025). https://arxiv.org/abs/2507.07860

[12] kaiko.ai et al. eva: Evaluation framework for pathology foundation models. MIDL (2024). https://openreview.net/forum?id=FNBQOPj18N

Histoboard was originally developed by Alexandre Filiot, Senior Data Scientist at Waiv, in 2026. The initiative is sponsored by Waiv with support from the Jaume Lab.